Deeptune, the reinforcement learning startup, has raised a $43 million Series A led by Andreessen Horowitz. The round also included participation from 776, Abstract Ventures, and Inspired Capital, along with notable angel investors such as OpenAI researcher Noam Brown, Mercor CEO Brendan Foody, and Applied Compute CEO Yash Patil. The raise positions Deeptune at the center of one of the fastest-growing infrastructure categories in AI: simulated environments where agents learn to perform real-world work.

As a talent marketplace that works directly with high-growth startups like Deeptune, Fonzi has a front-row seat to how early-stage AI companies build world-class engineering teams in New York City. Here is a closer look at what Deeptune has built, why the reinforcement learning market is accelerating, and what this Series A means for the future of AI agent development.

Deeptune builds high-fidelity reinforcement learning environments that simulate the day-to-day workflows of roles like accountants, customer support representatives, and DevOps engineers. These environments allow AI agents to learn how to navigate multi-step tasks across popular workplace software such as Slack, Salesforce, and various ticketing, finance, and monitoring tools.

CEO and cofounder Tim Lupo has compared the current state of AI models to pilots who have only ever read textbooks. In his framing, Deeptune builds the flight simulators that allow those pilots to practice before they fly a real plane. The company refers to these simulated environments as "training gyms," and it has built hundreds of them for leading AI labs.

The core insight is that static training data alone is not enough to teach AI agents how to operate effectively in messy, real-world enterprise settings. Models need to practice taking actions, receiving feedback, and iterating inside realistic digital workspaces. That is what Deeptune provides.
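To make the "act, get feedback, iterate" loop concrete, here is a minimal sketch of what an environment interface can look like. It is a toy illustration and not Deeptune's implementation: the `ToyTicketEnv` class, the three-step support workflow, the reward values, and the policy functions are all hypothetical names and numbers chosen for the example. It shows only the interaction loop, not a learning algorithm.

```python
from dataclasses import dataclass

# Hypothetical workflow the agent must complete, in order.
WORKFLOW = ("open_ticket", "reply_to_customer", "close_ticket")


@dataclass
class ToyTicketEnv:
    """Toy support-desk environment (illustrative only)."""
    max_turns: int = 10
    progress: int = 0  # number of workflow steps completed so far
    turns: int = 0     # number of actions taken in this episode

    def reset(self) -> int:
        """Start a new episode and return the initial observation."""
        self.progress = 0
        self.turns = 0
        return self.progress

    def step(self, action: str):
        """Apply one action and return (observation, reward, done)."""
        self.turns += 1
        if action == WORKFLOW[self.progress]:
            self.progress += 1
            reward = 1.0   # correct next step in the workflow
        else:
            reward = -0.1  # small penalty for a wrong or out-of-order action
        done = self.progress == len(WORKFLOW) or self.turns >= self.max_turns
        return self.progress, reward, done


def run_episode(env: ToyTicketEnv, policy) -> float:
    """Run one episode: the policy maps an observation to an action."""
    obs = env.reset()
    total_reward = 0.0
    done = False
    while not done:
        action = policy(obs)
        obs, reward, done = env.step(action)
        total_reward += reward
    return total_reward


def scripted_policy(obs: int) -> str:
    """A policy that already knows the workflow, used as a reference."""
    return WORKFLOW[obs]


if __name__ == "__main__":
    env = ToyTicketEnv()
    print("scripted policy reward:", run_episode(env, scripted_policy))
```

In a real training setup, the scripted policy would be replaced by a model whose behavior is updated from the rewards it collects, and the observation would be far richer than a single progress counter, for example the state of a simulated Slack or Salesforce workspace.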

Deeptune's raise reflects a broader shift in the AI industry away from training models exclusively on static web-scale data and toward running large-scale reinforcement learning in synthetic and interactive environments. This direction is visible in recent agentic RL work on tool-use agents at companies like Microsoft and OpenAI.

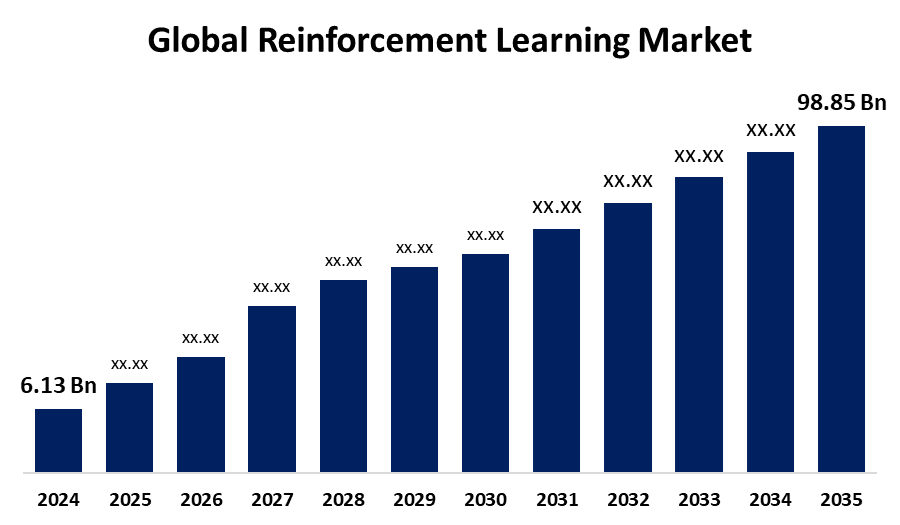

The global reinforcement learning market, which includes tools and environments, is projected to grow from approximately $11.6 billion in 2025 to more than $90 billion by 2034, according to ResearchAndMarkets. Major AI labs are reportedly considering spending more than a billion dollars on RL environments, and data-labeling incumbents are racing to build their own competing offerings.
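For a sense of scale, the growth rate implied by that projection can be worked out directly. The short calculation below is a sketch that assumes nine years of annual compounding (2025 to 2034) and treats $90 billion as the end value, so the result is a lower bound on the projected rate; the `implied_cagr` helper is a name introduced here for illustration.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that takes start_value to end_value over `years` years."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above, in billions of US dollars.
# Assumption: nine compounding periods between 2025 and 2034.
cagr = implied_cagr(start_value=11.6, end_value=90.0, years=2034 - 2025)
print(f"Implied CAGR: {cagr:.1%}")
```

Under those assumptions, the projection works out to a compound annual growth rate of a little over 25%.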

Several prominent voices in the AI community, including Marc Andreessen, have warned that AI companies are running out of high-quality human data for training. Studies project that public web data suitable for model training could be exhausted within the next decade. Deeptune positions its simulated workspaces as a solution to this constraint, offering a way to generate rich, task-specific training experience by having models practice inside realistic enterprise environments rather than relying on scraping more of the public internet.

Andreessen Horowitz led the round, with Marco Mascorro, a partner at the firm, pointing to Deeptune as a platform that enables a fundamental shift in how AI models learn. Rather than depending primarily on human-annotated data, models trained in Deeptune environments learn through interaction, taking actions and receiving rewards in dynamic settings.

The investor syndicate also includes 776 (Alexis Ohanian's fund), Abstract Ventures, and Inspired Capital, all of which had previously backed the company at the seed stage. The continuity of that investor base signals strong conviction in both the team and the market opportunity.

According to Lupo, Deeptune was the first company to build this type of environment, starting more than a year ago, and the results have validated the approach. The company says its environments have already contributed to recent advances in AI agents' ability to perform multi-step workflows on real software, moving beyond simple question answering to complex computer use. In Lupo's view, anything that can be distilled into an environment, from editing a video to building a leveraged buyout model in Excel, is something AI can eventually learn.

Deeptune is a roughly 20-person team based in New York City, and the company has made its location a deliberate part of its recruiting pitch. The team includes engineers and operators from Anthropic, Scale AI, Palantir, Hebbia, Glean, and Retool.

Lupo has framed New York as a competitive advantage for hiring. For engineers who want to work on frontier AI or AGI problems but prefer to be in New York rather than San Francisco, Deeptune is one of only a handful of options and likely the only early-stage one. That positioning has helped the company attract talent that might otherwise default to larger labs on the West Coast.

This is the type of recruiting challenge that Fonzi is built to support. Connecting early-stage, venture-backed AI startups with top software engineers in New York City is core to what we do. Deeptune exemplifies the kind of company in our network: technically ambitious, well-funded, and competing for the same caliber of engineer as companies ten times its size.

Deeptune's Series A is a signal that reinforcement learning environments are moving from research curiosity to production infrastructure. As AI agents become more capable and enterprises begin deploying them into real workflows, the need for high-fidelity training environments will only grow. Deeptune is building the foundational layer that makes that deployment possible.

We are proud to count Deeptune among Fonzi's customers and excited to see them continue scaling at the frontier of AI agent development.

If you are building an engineering team in New York and competing for top AI talent, Fonzi connects you with software engineers in as little as two weeks.